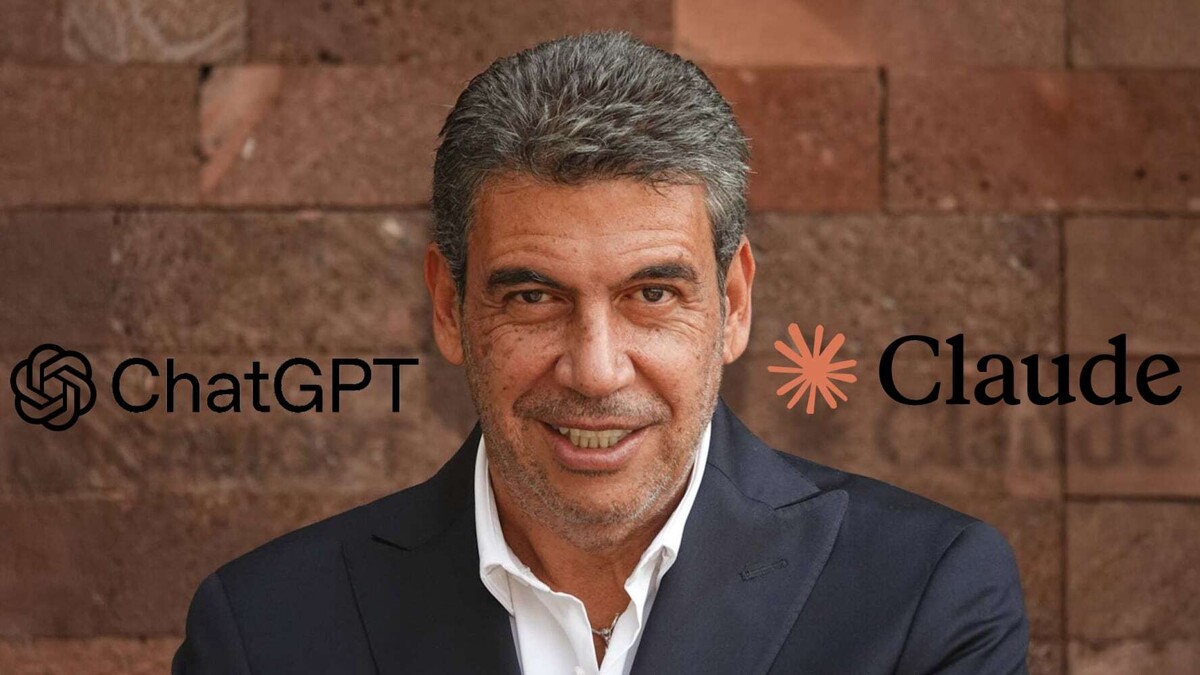

Tired of using ChatGPT and don't know what other AI to try? Entrepreneur Arturo Elías Ayub has a recommendation.

The América Móvil and Telmex Foundation executive recently shared that he has switched AI tools. As he explained, in recent days he stopped using ChatGPT and now bets on Claude AI, a platform he says he likes much more than OpenAI's chatbot and that has become popular for its usefulness in a range of fields. 'I switched from ChatGPT to Claude, and I'm not kidding, I'm really liking it a lot more,' said the former shark from Shark Tank México in a video posted on his TikTok account.

Elías Ayub pointed out that switching platforms does not mean losing personalization or starting from scratch, because user information can be transferred from one tool to the other. According to him, this can be done directly from the apps themselves and takes only a few minutes. 'And if you're like me and said, "But ChatGPT already knows everything about me, so it answers me in a personalized way," ask ChatGPT itself or Claude (...), or have everything ChatGPT knows about you passed over to Claude, and that's it.' 'It's super easy,' he said. 'I saw it in a video on Insta and I did it in three minutes.'

Claude AI is a generative artificial intelligence system developed by Anthropic, a company founded in 2021 by former OpenAI employees. Anthropic's main focus is the safety, interpretability, and control of language models. The name 'Claude' references Claude Shannon, considered one of the fathers of information theory. Since its launch, the system has evolved through different versions focused on tasks such as writing, document analysis, programming, and conversational assistance. Unlike other models, Claude AI was designed around what the company calls 'constitutional AI,' a method that aims to align the system's responses with defined ethical principles, reducing the risk of harmful or biased content.

Among its capabilities, Claude can process large volumes of text, summarize long documents, generate written content, and assist with complex tasks. One of its standout features is the amount of context it can handle compared to other models. As for differences with ChatGPT, users have noted variations in response style, analytical depth, and the handling of long instructions. While ChatGPT stands out for its integration across multiple platforms and ecosystems, Claude has gained attention for its focus on safety and large-scale text processing. Claude can now also build interactive charts and diagrams directly in the chat, a feature released in beta on all plans, including the free one.

The conflict between Anthropic and the U.S. government

Anthropic, the developer of Claude AI, is currently in a legal conflict with the United States government after refusing to allow the Pentagon to use its technology for military purposes. The dispute originated with a declaration from the Department of Defense that classified the company as a risk to the U.S. supply chain. That declaration served as the basis for the Trump administration to push for a ban and to cut ties with the company, amid disagreements over how its artificial intelligence could be used. Anthropic stated that it had sought guarantees that its AI would not be used for mass surveillance or in autonomous weapons systems, conditions that the authorities rejected on national security grounds.

Anthropic responded on two legal fronts. On one hand, it asked an appeals court in Washington to temporarily suspend the Pentagon's declaration; a panel of judges rejected that request but agreed to review the case on an accelerated basis and scheduled a hearing for May. On the other hand, the company filed a lawsuit in a federal court in California to halt the ban. In that case, a judge temporarily blocked the measure while the litigation continues, which puts any definitive restriction on its technology on hold. In its arguments, Anthropic maintains that the government's decision is arbitrary and could cost it billions of dollars, in addition to setting a precedent for other federal contractors.

The company has also faced internal incidents. An executive recently acknowledged the accidental leak of code related to its Claude system, attributed to errors in its release processes. Days earlier, it was reported that internal files had been accessible in a public system, exposing the company's technical information.